This post will introduce KDA Asset Allocation. KDA, i.e. Kipnis Defensive Adaptive Asset Allocation, combines Wouter Keller's and TrendXplorer's Defensive Asset Allocation with ReSolve Asset Management's Adaptive Asset Allocation. Its profile is unlike that of most tactical asset allocation strategies I've seen. Namely, it barely loses any money in 2015, which was generally a brutal year for tactical asset allocation strategies.

So, the idea for this strategy came from reading an excellent post from TrendXplorer on the idea of a canary universe: using a pair of assets to determine when to increase exposure to risky/aggressive assets, and when to stay in cash. The paper by Wouter Keller and TrendXplorer does not gauge this on the momentum of the investment universe itself. Instead, it uses VWO and BND as a proxy universe. Furthermore, in situations that call for full exposure to risky assets, the latest iteration of DAA actually recommends leveraging that exposure, which will also be demonstrated. I also applied the 1-3-6-12 fast filter espoused by Wouter Keller and TrendXplorer. This is the sum of 12 * 1-month momentum, 4 * 3-month momentum, 2 * 6-month momentum, and 12-month momentum (that is, each weight multiplied by its lookback in months equals 12). This puts a large emphasis on the most recent month of returns, both for the risk on/off assets and for the invested assets themselves.
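To illustrate the weighting, here is a minimal base-R sketch of the 13612F score computed from a vector of monthly returns. The helper names `cumulative_return` and `momentum_13612` are hypothetical. Working from monthly returns is also a simplifying assumption: the full code in this post compounds daily returns between month-end endpoints instead.

```r
# Hypothetical helpers illustrating the 1-3-6-12 fast momentum (13612F) filter.
# Assumption: `monthly` holds simple monthly returns, oldest first, length >= 12.
cumulative_return <- function(r) prod(1 + r) - 1

momentum_13612 <- function(monthly) {
  n <- length(monthly)
  stopifnot(n >= 12)
  r1  <- cumulative_return(monthly[n])
  r3  <- cumulative_return(monthly[(n - 2):n])
  r6  <- cumulative_return(monthly[(n - 5):n])
  r12 <- cumulative_return(monthly[(n - 11):n])
  # each weight times its lookback in months equals 12: 12*1, 4*3, 2*6, 1*12
  12 * r1 + 4 * r3 + 2 * r6 + r12
}

# Example: eleven flat months followed by a +1% month
monthly <- c(rep(0, 11), 0.01)
momentum_13612(monthly)   # 0.19, since every lookback sees the same +1% move
```

In this example the most recent month contributes 12 + 4 + 2 + 1 = 19 times its return. That is why the filter reacts so quickly to the front month.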

However, rather than adopt the universe of investments from the TrendXplorer post, I decided to defer to the well-thought-out universe construction from Adaptive Asset Allocation. I also adopted its idea of using a mean-variance optimization approach to weight the selected assets.

So, here are the rules:

Take the investment universe (SPY, VGK, EWJ, EEM, VNQ, RWX, IEF, TLT, DBC, GLD), compute the 1-3-6-12 momentum filter for each asset (that is, the sum of 12 * 1-month momentum, 4 * 3-month momentum, 2 * 6-month momentum, and 12-month momentum), and rank them. The selected assets are those that rank in the top 5 and have momentum above zero.
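Here is a small base-R sketch of the selection rule, mirroring the `rank(assetMoms) >= 6` test in the code for a 10-asset universe. The function name `select_assets` and the momentum scores are made up for illustration.

```r
# Keep assets that are in the top `top_n` by momentum AND have momentum above zero.
# Assumption: `moms` is a named numeric vector of 13612F scores (values below are made up).
select_assets <- function(moms, top_n = 5) {
  high_rank <- rank(moms) > length(moms) - top_n   # for 10 assets: rank >= 6
  positive  <- moms > 0
  names(moms)[high_rank & positive]
}

moms <- c(SPY = 0.30, VGK = -0.05, EWJ = 0.10, EEM = -0.20, VNQ = -0.02,
          RWX = -0.01, IEF = 0.05, TLT = -0.08, DBC = -0.15, GLD = -0.03)
select_assets(moms)   # "SPY" "EWJ" "IEF"
```

VNQ and RWX rank in the top 5 here but have negative momentum, so the intersection leaves only three assets.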

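Run a basic quadratic optimization algorithm on the selected assets, such as the portfolio.optim function from the tseries package. Feed it equal expected returns, since every selected asset passed the dual momentum filter. With equal expected returns, the optimization reduces to finding the minimum-variance portfolio of the selected assets.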
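Here is why equal expected returns turn this into a minimum-variance problem. If every expected return is 1 and the target return is their mean (also 1), the return constraint is identical to the full-investment constraint. The sketch below is a swapped-in technique, not what the post runs. It uses the closed-form *unconstrained* minimum-variance solution, which, unlike portfolio.optim's default long-only solve (`shorts = FALSE`), can produce negative weights. The function name `min_variance_weights` is hypothetical.

```r
# Closed-form unconstrained minimum-variance weights: w = S^-1 1 / (1' S^-1 1).
# Note: this allows shorting, whereas portfolio.optim (shorts = FALSE) does not.
min_variance_weights <- function(covmat) {
  ones <- rep(1, ncol(covmat))
  raw <- solve(covmat, ones)   # S^-1 1
  w <- raw / sum(raw)          # normalize so weights sum to 1
  names(w) <- colnames(covmat)
  w
}

# Uncorrelated example: weights are proportional to 1 / variance
covmat <- diag(c(0.04, 0.01))
colnames(covmat) <- c("SPY", "IEF")
min_variance_weights(covmat)   # SPY 0.2, IEF 0.8
```

When none of the unconstrained weights is negative, the long-only solution coincides with this one. Otherwise the long-only constraint binds and a QP solver is needed.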

From Adaptive Asset Allocation, the covariance matrix is built from two pieces. The first is one-month volatility estimates. The second is a correlation matrix that is a weighted average of correlations over the same lookbacks used for the momentum filter: (12 * 1-month correlation + 4 * 3-month correlation + 2 * 6-month correlation + 12-month correlation) / 19, where 19 = 12 + 4 + 2 + 1 is the sum of the weights.
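Here is a base-R sketch of that covariance construction, mirroring `t(vols) %*% vols * cors` in the code below. It assumes `daily` is a matrix of daily returns (oldest row first). It also approximates each month as 21 trading days, a hypothetical simplification: the post itself uses calendar month endpoints. `blended_cov` is an illustrative name.

```r
# Blend correlations with 1-3-6-12 weights (12, 4, 2, 1; sum 19),
# then scale by one-month volatilities to get a covariance matrix.
blended_cov <- function(daily, days_per_month = 21) {
  n <- nrow(daily)
  window <- function(months) daily[(n - months * days_per_month + 1):n, , drop = FALSE]
  cors <- (12 * cor(window(1)) + 4 * cor(window(3)) +
           2 * cor(window(6)) + cor(window(12))) / 19
  vols <- apply(window(1), 2, sd)   # one-month volatility per asset
  outer(vols, vols) * cors          # cov_ij = vol_i * vol_j * cor_ij
}

set.seed(1)
daily <- matrix(rnorm(252 * 3, sd = 0.01), ncol = 3,
                dimnames = list(NULL, c("SPY", "TLT", "GLD")))
covs <- blended_cov(daily)
```

Because every correlation matrix has a unit diagonal and the weights sum to 19, the blended diagonal is still 1. The variances therefore come purely from the last month.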

Next, compute your exposure to risky assets as the percentage of the two canary assets, VWO and BND, that have positive 1-3-6-12 momentum (so 0%, 50%, or 100%). If both assets have positive momentum, leverage the portfolio (if desired). Reapply this algorithm every month.
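Here is a minimal base-R sketch of the canary rule, mirroring `mean(cpMoms > 0)` and the leverage step in the code. `risky_exposure` is a hypothetical name, and the momentum inputs are made up.

```r
# Risky exposure = share of canary assets with positive 13612F momentum;
# the optional leverage factor applies only when every canary is positive.
risky_exposure <- function(canary_moms, leverage_factor = 1) {
  pct_aggressive <- mean(canary_moms > 0)
  if (pct_aggressive == 1) pct_aggressive * leverage_factor else pct_aggressive
}

risky_exposure(c(VWO = 0.12, BND = -0.01))                         # 0.5
risky_exposure(c(VWO = 0.12, BND = 0.03), leverage_factor = 1.5)   # 1.5
risky_exposure(c(VWO = -0.10, BND = -0.02))                        # 0
```
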

All of the allocation not made to risky assets goes to IEF if IEF has positive 1-3-6-12 momentum; otherwise, that allocation stays in cash. IEF is also in the pool of risky assets, so some months may have a large IEF allocation.
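Here is a base-R sketch of the crash-protection step. The optimized risky weights are scaled by the canary exposure, the remainder goes to IEF if its momentum is positive, and whatever is left is cash (zero return, as in the post). The function `allocate` and the weights below are hypothetical, and `risky_wts` is assumed to contain an `IEF` entry.

```r
# Scale risky weights by canary exposure, route the rest to IEF (if its momentum
# is positive), and record the leftover as CASH.
allocate <- function(risky_wts, pct_aggressive, ief_mom) {
  wts <- risky_wts * pct_aggressive
  pct_cp <- 1 - pct_aggressive                  # crash-protection share
  if (ief_mom > 0) wts["IEF"] <- wts["IEF"] + pct_cp
  c(wts, CASH = 1 - sum(wts))
}

risky_wts <- c(SPY = 0.5, IEF = 0.25, GLD = 0.25)
allocate(risky_wts, pct_aggressive = 0.5, ief_mom = 0.02)
# SPY 0.25, IEF 0.625, GLD 0.125, CASH 0
allocate(risky_wts, pct_aggressive = 0.5, ief_mom = -0.02)
# SPY 0.25, IEF 0.125, GLD 0.125, CASH 0.5
```
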

The one somewhat optimistic assumption is that the strategy observes the close on a given day and enters at that same close. Given a holding period of a month, this should matter far less than it would for a strategy that potentially turns over every day.

Here’s the R code to do this:

```r
# KDA asset allocation
# KDA stands for Kipnis Defensive Adaptive (Asset Allocation).

# compute strategy statistics
stratStats <- function(rets) {
  stats <- rbind(table.AnnualizedReturns(rets), maxDrawdown(rets))
  stats[5,] <- stats[1,]/stats[4,]          # annualized return / max drawdown
  stats[6,] <- stats[1,]/UlcerIndex(rets)   # annualized return / Ulcer index
  rownames(stats)[4] <- "Worst Drawdown"
  rownames(stats)[5] <- "Calmar Ratio"
  rownames(stats)[6] <- "Ulcer Performance Index"
  return(stats)
}

# required libraries
library(quantmod)
library(PerformanceAnalytics)
library(tseries)

# symbols: 10 investable assets (Adaptive Asset Allocation) + 2 canary assets (VWO, BND)
symbols <- c("SPY", "VGK", "EWJ", "EEM", "VNQ", "RWX", "IEF", "TLT", "DBC", "GLD", "VWO", "BND")

# get data (adjusted closes via getSymbols)
rets <- list()
for(i in 1:length(symbols)) {
  returns <- Return.calculate(Ad(get(getSymbols(symbols[i], from = '1990-01-01'))))
  colnames(returns) <- symbols[i]
  rets[[i]] <- returns
}
rets <- na.omit(do.call(cbind, rets))

# algorithm
KDA <- function(rets, offset = 0, leverageFactor = 1.5) {

  # get monthly endpoints, allow for offsetting ala AllocateSmartly/Newfound Research
  ep <- endpoints(rets) + offset
  ep[ep < 1] <- 1
  ep[ep > nrow(rets)] <- nrow(rets)
  ep <- unique(ep)
  epDiff <- diff(ep)
  if(last(epDiff)==1) { # if the last period only has one observation, remove it
    ep <- ep[-length(ep)]
  }

  # initialize vector holding zeroes for assets
  emptyVec <- data.frame(t(rep(0, 10)))
  colnames(emptyVec) <- symbols[1:10]
  allWts <- list()

  # we will use the 13612F filter
  for(i in 1:(length(ep)-12)) {

    # 12 months of returns for all 12 assets -- 2 of which are our crash protection assets
    retSubset <- rets[c((ep[i]+1):ep[(i+12)]),]
    epSub <- ep[i:(i+12)]
    sixMonths <- rets[(epSub[7]+1):epSub[13],]
    threeMonths <- rets[(epSub[10]+1):epSub[13],]
    oneMonth <- rets[(epSub[12]+1):epSub[13],]

    # compute 13612 fast momentum
    moms <- Return.cumulative(oneMonth) * 12 + Return.cumulative(threeMonths) * 4 +
      Return.cumulative(sixMonths) * 2 + Return.cumulative(retSubset)
    assetMoms <- moms[,1:10] # Adaptive Asset Allocation investable universe
    cpMoms <- moms[,11:12] # VWO and BND from Defensive Asset Allocation

    # find qualifying assets
    highRankAssets <- rank(assetMoms) >= 6 # top 5 assets
    posReturnAssets <- assetMoms > 0 # positive momentum assets
    selectedAssets <- highRankAssets & posReturnAssets # intersection of the above

    # perform mean-variance/quadratic optimization
    investedAssets <- emptyVec
    if(sum(selectedAssets)==0) {
      investedAssets <- emptyVec
    } else if(sum(selectedAssets)==1) {
      investedAssets <- emptyVec + selectedAssets
    } else {
      idx <- which(selectedAssets)
      # use 1-3-6-12 fast correlation average to match with momentum filter
      cors <- (cor(oneMonth[,idx]) * 12 + cor(threeMonths[,idx]) * 4 +
                 cor(sixMonths[,idx]) * 2 + cor(retSubset[,idx]))/19
      vols <- StdDev(oneMonth[,idx]) # use last month of data for volatility computation from AAA
      covs <- t(vols) %*% vols * cors

      # do standard min vol optimization (equal expected returns for all selected assets)
      minVolRets <- t(matrix(rep(1, sum(selectedAssets))))
      minVolWt <- portfolio.optim(x=minVolRets, covmat = covs)$pw
      names(minVolWt) <- colnames(covs)
      investedAssets <- emptyVec
      investedAssets[,selectedAssets] <- minVolWt
    }

    # crash protection -- between aggressive allocation and crash protection allocation
    pctAggressive <- mean(cpMoms > 0)
    investedAssets <- investedAssets * pctAggressive
    pctCp <- 1-pctAggressive

    # if IEF momentum is positive, invest all crash protection allocation into it
    # otherwise stay in cash for crash allocation
    if(assetMoms["IEF"] > 0) {
      investedAssets["IEF"] <- investedAssets["IEF"] + pctCp
    }

    # leverage portfolio if desired in cases when both risk indicator assets have positive momentum
    if(pctAggressive == 1) {
      investedAssets = investedAssets * leverageFactor
    }

    # append to list of monthly allocations
    wts <- xts(investedAssets, order.by=last(index(retSubset)))
    allWts[[i]] <- wts
  }

  # put all weights together and compute cash allocation
  allWts <- do.call(rbind, allWts)
  allWts$CASH <- 1-rowSums(allWts)

  # add cash returns to universe of investments
  investedRets <- rets[,1:10]
  investedRets$CASH <- 0

  # compute portfolio returns
  out <- Return.portfolio(R = investedRets, weights = allWts)
  return(out)
}

# different leverages
KDA_100 <- KDA(rets, leverageFactor = 1)
KDA_150 <- KDA(rets, leverageFactor = 1.5)
KDA_200 <- KDA(rets, leverageFactor = 2)

# compare
compare <- na.omit(cbind(KDA_100, KDA_150, KDA_200))
colnames(compare) <- c("KDA_base", "KDA_lev_150%", "KDA_lev_200%")
charts.PerformanceSummary(compare, colorset = c('black', 'purple', 'gold'),
                          main = "KDA AA with various offensive leverages")
stratStats(compare)
```

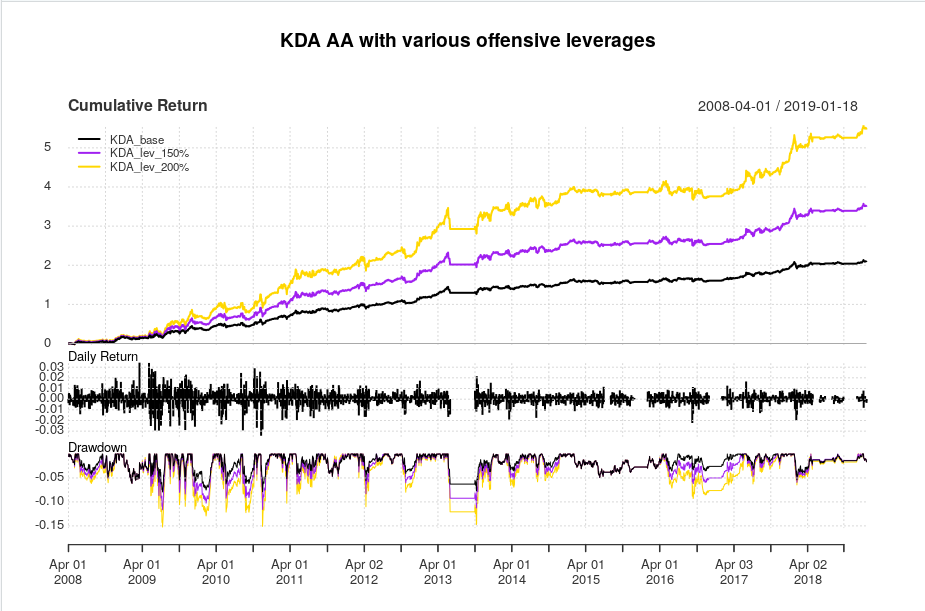

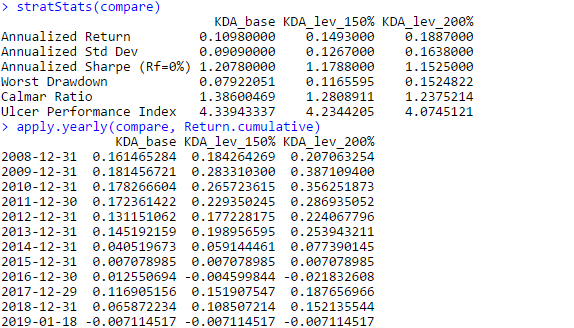

And here are the equity curves and statistics:

What appeals to me about this strategy is that, unlike most tactical asset allocation strategies, it comes out relatively unscathed by the 2015-2016 whipsaws that hurt so many others. However, this strategy isn't flawless. Sometimes it decides that it'd be a great time to enter full risk-on mode and then hits a drawdown, as the drawdown curve shows. Nevertheless, the Calmar ratios are fairly solid for a tactical asset allocation rotation strategy. Even in a brutal 2018 that decimated all risk assets, this strategy managed to post a noticeable *positive* return. On the downside, the leverage plan actually seems to *negatively* affect the strategy's risk/reward characteristics. As leverage during aggressive allocations increases, characteristics such as the Sharpe and Calmar ratios actually *decrease*.
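For readers who want to see what the Calmar ratio and Ulcer Performance Index in stratStats measure, here is a base-R sketch. It takes annualized return divided by maximum drawdown, and annualized return divided by the root-mean-square drawdown (the Ulcer Index). It assumes daily simple returns and 252 trading days a year. The helper names are hypothetical, and PerformanceAnalytics' own implementations may differ in small details.

```r
# Drawdown from the running peak of wealth; the starting value of 1 counts as a peak.
drawdowns <- function(r) {
  wealth <- cumprod(1 + r)
  peak <- pmax(cummax(wealth), 1)
  1 - wealth / peak
}
annualized_return <- function(r, scale = 252) prod(1 + r)^(scale / length(r)) - 1
calmar <- function(r, scale = 252) annualized_return(r, scale) / max(drawdowns(r))
ulcer_index <- function(r) sqrt(mean(drawdowns(r)^2))
ulcer_performance_index <- function(r, scale = 252) annualized_return(r, scale) / ulcer_index(r)

r <- c(0.10, -0.50, 0.20)
drawdowns(r)     # 0.0 0.5 0.4
ulcer_index(r)   # sqrt(0.41 / 3)
```

Because the Ulcer Index averages squared drawdowns over every day, it penalizes long, deep drawdowns more than a single worst point does. This is one reason the two ratios can rank strategies differently.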

Overall, I think there are different aspects to unpack here, such as the performance of risky assets as a function of the two canary universe assets, and a more optimal leverage plan. This was just a first attempt at combining two excellent ideas and seeing where the performance goes. I also hope that this strategy can get a longer backtest over at AllocateSmartly.

Thanks for reading.

Pingback: Quantocracy's Daily Wrap for 01/24/2019 | Quantocracy

Really nice work; I have used each of these components, and I like the idea of combining them.

Nicely organized R code.

Thanks!

Thank you for sharing this algo, it seems to be a good fit of several concepts, just as Scott said earlier.

I ran into some issues running your code. It seems like some library is missing (I guess you also need an individual token to run the API against Quandl as well?):

```
Error in Quandl(paste0("EOD/", symbols[i]), start_date = "1990-12-31",  :
  could not find function "Quandl"
```

Best regards,

Robin

I have not tested it in R yet (I am still way too slow in R), but Quandl does require a fee API token passed via the code. Quandl's handshake with R is awesome; much faster than I expected.

Oops, apologies. I forgot to update the code to the Yahoo variant. The results are largely similar, but the Yahoo version should work for free.

Hi Ilya. Thanks for sharing this.

I am wondering what the offset parameter in the code chunk below is intended for. Any examples welcome.

```r
# get monthly endpoints, allow for offsetting ala AllocateSmartly/Newfound Research
ep <- endpoints(rets) + offset
ep[ep < 1] <- 1
ep[ep > nrow(rets)] <- nrow(rets)
ep <- unique(ep)
```

I also assume there was (or is) some intention to put some code in the curly brackets below:

if(pctCp == 0) {}

What is the intention of this? Any examples?

And last question: I guess the division by 19 is due to the sum of the weights (12+4+2+1) below?

```r
cors <- (cor(oneMonth[, idx])*12 + cor(threeMonths[, idx])*4 +
           cor(sixMonths[, idx])*2 + cor(retSubset[, idx]))/19
```

Thanks.

Offset: it's there to investigate the effects of not trading exactly at month-end. E.g., if you set offset to 10, you trade around the middle of the month instead.
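Here is a base-R sketch of what the offset does. Every month-end row index is shifted by a fixed number of trading days and then clamped to the data range, as in the KDA function. It is a stand-in for `xts::endpoints()`, which also returns a leading 0 and the final row. It skips the KDA function's extra step that drops a final one-observation period. `month_endpoints` and `offset_endpoints` are hypothetical names.

```r
# Base-R stand-in for month-end endpoints on a Date vector (leading 0, last row included).
month_endpoints <- function(dates) {
  ym <- format(dates, "%Y-%m")
  c(0L, which(ym[-1] != ym[-length(ym)]), length(dates))
}

# Shift endpoints by `offset` rows, then clamp to [1, number of rows].
offset_endpoints <- function(dates, offset = 0) {
  ep <- month_endpoints(dates) + offset
  ep[ep < 1] <- 1
  ep[ep > length(dates)] <- length(dates)
  unique(ep)
}

dates <- seq(as.Date("2019-01-01"), as.Date("2019-03-31"), by = "day")
dates <- dates[!format(dates, "%u") %in% c("6", "7")]   # drop weekends
offset_endpoints(dates, offset = 0)    # 1 23 43 64 (month-ends)
offset_endpoints(dates, offset = 10)   # 10 33 53 64 (ten trading days later)
```
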

The pctCp==0 is not supposed to be there and does nothing. Removed that. And you are correct regarding dividing by 19.

Thanks for your generosity in sharing ideas and code with us.

After reading your blogs and codes, I have one question.

In the case of leverageFactor = 2, consider the months where pctAggressive <- mean(cpMoms > 0) is 1, i.e. where cpMoms is positive for both VWO and BND.

For most of those rows, rowSums(allWts) equals 2, because the leverage factor is 2.

Then allWts$CASH <- 1 - rowSums(allWts), so 1 - 2 equals -1.

If I read you correctly, the cash allocation in most cases is -1.

So do you mean borrowing an equal amount of cash, or shorting cash?

Or does it really not matter? Cash has zero returns, so 1 or -1 cash has zero return.

Thanks again for your R code with great ideas.

It means you’re borrowing cash. That is, you’re leveraging.
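A tiny base-R sketch makes the negative cash weight concrete. With weights summing to 2, CASH = 1 - 2 = -1, i.e. one unit borrowed. The post models cash at a zero return, so that leg contributes nothing. The hypothetical `borrow_rate` argument shows where a financing cost would enter if it were modeled (the post does not include one). This is a single holding period with no intra-month drift, unlike `Return.portfolio`.

```r
# One-period portfolio return including an explicit cash leg.
# A negative cash weight pays `borrow_rate`; a positive one would earn it.
portfolio_return <- function(lev_wts, period_rets, borrow_rate = 0) {
  cash <- 1 - sum(lev_wts)
  sum(lev_wts * period_rets) + cash * borrow_rate
}

lev_wts     <- c(SPY = 1.2, TLT = 0.8)     # hypothetical weights, 2x leverage
period_rets <- c(SPY = 0.02, TLT = -0.01)  # hypothetical one-month returns
portfolio_return(lev_wts, period_rets)                       # 0.016
portfolio_return(lev_wts, period_rets, borrow_rate = 0.004)  # 0.012
```
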

Hi Ilya,

Nice combination of interesting ideas and thanks for sharing…

A few questions/ ideas:

1. Have you considered or tried using a medium- or long-term VIX term structure signal (i.e. VIX vs. VX3M, or VIX3M vs. VXMT) > 1 as an additional canary signal?

2. Have you tried cleaning the correlation matrix with eigen clipping? I am not sure of the value add in this case given the investment universe is small and intentionally diversified, but I have found it to be valuable when dealing with a large universe of assets with time varying risk factor exposure (i.e. relationship between equities and long term bonds) (see: https://papers.ssrn.com/sol3/papers.cfm?abstract_id=2860037).

Curious to know your thoughts!

Also, happy to share some data/ research on other canary indicators from the bond, swaps, and vol markets.

Best

1) Either you also trade vol, or you’ve been keeping up with my vol strategies. I haven’t considered using vol metrics in tactical asset allocation, though it’d be terrific if someone else can present that idea.

2) Have never used eigen clipping.

3) Thanks for the offer of data, though I’m not sure what I’d do with them.

If I may inquire, how did you come about this data? Do you work at a bank or hedge fund?

1) Tested it, and it works well when you classify the instruments that have high downside beta to the VIX term structure filter. Some other canary indicators based on data available from FRED also work quite well and have low signed correlation with the instruments in the canary basket.

2) Cleaning the correlation matrix makes a meaningful difference when the ratio of the number of instruments to the number of days in the lookback period used to estimate the matrix approaches 1.

3) I work for a fund

Happy to share. Just lmk.
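For readers unfamiliar with the cleaning mentioned above, here is a base-R sketch of one common form, eigenvalue clipping. Eigenvalues below the Marchenko-Pastur upper edge (1 + sqrt(N/T))^2 are treated as noise and replaced by their average, which preserves the trace. The result is then rescaled to a unit diagonal. This is a generic illustration, not part of KDA or of any specific paper's code. `clip_correlation` is a hypothetical name.

```r
# Eigenvalue clipping: flatten "noise" eigenvalues below the Marchenko-Pastur edge.
# Assumptions: `cors` is an N x N correlation matrix estimated from `n_obs` observations.
clip_correlation <- function(cors, n_obs) {
  n <- ncol(cors)
  lambda_max <- (1 + sqrt(n / n_obs))^2              # MP upper edge for q = N/T
  e <- eigen(cors, symmetric = TRUE)
  vals <- e$values
  noise <- vals < lambda_max
  if (any(noise)) vals[noise] <- mean(vals[noise])   # keeps the trace unchanged
  cleaned <- e$vectors %*% diag(vals, n) %*% t(e$vectors)
  d <- sqrt(diag(cleaned))
  cleaned <- cleaned / outer(d, d)                   # restore a unit diagonal
  dimnames(cleaned) <- dimnames(cors)
  cleaned
}

set.seed(42)
x <- matrix(rnorm(30 * 10), ncol = 10)   # 10 pure-noise series, 30 observations
cleaned <- clip_correlation(cor(x), n_obs = nrow(x))
```

With N/T = 10/30 the sample correlations of pure noise look far from zero. Clipping pulls most of that spurious structure back toward the identity.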

Would love to see the link to your code. And which fund, if I may inquire?

Hi Ilya,

Thanks for sharing your code.

When I run your code in RStudio (1.0.136), I get the following errors:

```
> KDA_100 KDA_150 KDA_200
> # compare
> compare colnames(compare) <- c("KDA_base", "KDA_lev_150%", "KDA_lev_200%")
Error in colnames(compare) charts.PerformanceSummary(compare, colorset = c('black', 'purple', 'gold'),
+ main = "KDA AA with various offensive leverages")
Error in inherits(x, "xts") : object 'compare' not found
```

Any idea what is causing this? Is it a version issue?

Thanks,

John

As you see, the compare object is just the 3 return streams cbinded to each other.

Did you ever figure out how to fix this error?

Well, you need to actually create the compare object.

Installing the “testthat” package somehow made it work for me. Thank you.

Ilya,

Nice coding/algo; thanks for sharing it with the write-up. I was able to backtest with a longer history, back to 1997, simply by back-extending the ETFs with corresponding, well-correlated mutual funds that have longer EOD histories. The results were very similar. If interested, you can find more info on the approach here: https://systematicinvestor.wordpress.com/category/r/page/8/

I do have one observation. Do you have any suggestions on how to make the strategy less sensitive to the rebalance date? Performance falls off quite a bit when trading even a day either side of the end of the month. Can you suggest a hint or two on how to modify the code to support weekly trading instead of monthly, with a choice of day of the week (Friday or Monday, for instance)? Thanks!

I fixed the code, so you can just set the lag by an offset. And yeah, I’ve found that you do get a drop-off trading anytime after the end of the month (and it ramps up in the last week). Really makes one wonder about the efficacy of the filter.

Thanks for the reply. I managed to tweak your algorithm to see how weekly and quarterly rebalancing would fare; both were far worse than rebalancing at the literal end of the month. Keep up the excellent work you share here; I am always interested in strategies that yield a decent CAGR with low daily max drawdown!

Hi Ilya,

Thank you for your excellent code and accompanying notes. Looking at allWts, I notice there are months with negative weights. Does that imply that the algo has shorted that ETF for that month? Thanks

Pingback: Asset Allocation Roundup - AllocateSmartly

Pingback: Ilya Kipnis' Defensive Adaptive Asset Allocation - AllocateSmartly

For leverage, do you use leveraged ETFs or broker margin?

Are the financing costs included in the simulation? What interest rate do you use?

Thank you very much and congratulations for this fantastic work

I assume leveraged ETFs exist. Costs not included but can be easily included. Interest rates should be fairly negligible.

I like that you don’t complicate the code excessively. The transaction costs and interest rates can be neglected without degrading the result.

They can be added later, if parties are interested in them.

Can I lever the crash protection asset (IEF)?

No, since you can only employ leverage when you’re fully in risk assets.

IB margin or leveraged ETFs? Thanks

Leveraged ETFs if they exist.

Is the leverage rebalanced monthly?

Yes

Thanks, Mr. Kipnis, for sharing your work, as usual, and for helping me increase my knowledge of R.

This seems to be a very interesting TAA.

One question: how do we get the historical monthly allocation of the different assets?

Thanks a lot

Jean Christophe

Use verbose = TRUE in the Return.portfolio function, and then call the names function on your output. E.g. out <- Return.portfolio(returns, weights, verbose = TRUE); names(out). Then check the beginning-of-period weights object (it should be called something like BOP.weights).

Thanks for such a great strategy/concept, and apologies for my ignorance on how to properly use R. Assuming one runs the script above in RStudio, what exactly does one type to get the latest recommended allocation based on the most recently ended month?

I tried multiple variations of the Return.portfolio function but I just get various errors such as “Error in checkData(weights, method = “xts”)”. Any insight would be hugely appreciated. Many thanks!

Hello Ilya, I see you are assuming leverage with no interest cost; is that right?

No interest on leverage.

Thank you for the post and detailed R code. My question applies more broadly to the canary universe approach for asset allocation. It is fairly straightforward: in the Keller / Keuning canary universe paper, they describe being able to test this methodology dating back to at least 1970. As far as I know, VWO / emerging market equities as an asset class only have data going back to 1990 at the earliest. Am I losing my mind here? How could you test the VWO / BND canary set dating back to 1970? Just wondering if I am missing something. Thank you!

Indices from proprietary data vendors.

Hello, hope you are doing well!

First of all, I would like to thank you for your post and detailed instructions.

I have a question: what can be done when the number of assets is different from 12? I am trying to apply this code using different assets, but I keep getting the following error:

```
Error in solve.QP(Dmat, dvec, Amat, bvec = b0, meq = 2) :
  NA/NaN/Inf in foreign function call (arg 1)
Called from: solve.QP(Dmat, dvec, Amat, bvec = b0, meq = 2)
```

If you could help me out, I will be very grateful.

Check lines 71 and 72.

I changed all the lines in which the number of assets need to be specified, those being the lines you mentioned as well as lines 52, 53 and 134.

However, I still get the same error and I am not sure of what could be the reason behind this.

I am using 10 assets in total, 2 of which are the crash protection assets.

Hello Mr. Kipnis

I'm an absolute beginner and I don't know how to figure out the current proposed asset allocation. What can I type in to see it? I'm using RStudio. I think right now it's 100% cash.

thanks for your work

Check the latest weights.